Introduction

A rate limiter service controls the rate of requests that reach the application they are addressed to. A rate limiter can be as simple as basic DDoS attack protection or as complex as evaluating user-specific criteria before allowing requests through. In this article, we cover a rate limiter that sits in the path of the requests and responses flowing through it and evaluates the rate limiting criteria along the way. To start with, we define a full-fledged service that broadly covers a variety of custom rate limiting criteria.

Features

Net request-rate control

This feature controls the number of requests per second reaching the backend. The rate limiter therefore needs to perform time-bound calculations to check whether the number of requests per second crosses a certain threshold. If it does, the limiter should respond with HTTP status 429 (Too Many Requests).
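The time-bound check can be sketched as a sliding-window log. This is a minimal single-process sketch, assuming one limiter instance per route; the class and parameter names are illustrative, and a horizontally scaled deployment would need to share these counters across pods.

```python
import time
from collections import deque
from typing import Optional


class SlidingWindowLimiter:
    """Allow at most `max_requests` within any rolling `window_seconds` interval."""

    def __init__(self, max_requests: int, window_seconds: float = 1.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._accepted = deque()  # arrival times of requests that were let through

    def check(self, now: Optional[float] = None) -> int:
        """Return the HTTP status to use: 200 to pass the request on, 429 to reject it."""
        now = time.monotonic() if now is None else now
        # Forget requests that have slid out of the rolling window.
        while self._accepted and now - self._accepted[0] >= self.window_seconds:
            self._accepted.popleft()
        if len(self._accepted) >= self.max_requests:
            return 429  # Too Many Requests
        self._accepted.append(now)
        return 200


limiter = SlidingWindowLimiter(max_requests=2, window_seconds=1.0)
statuses = [limiter.check(t) for t in (0.0, 0.1, 0.2, 1.05)]  # [200, 200, 429, 200]
```

A sliding log gives exact per-second counts at the cost of storing up to `max_requests` timestamps; a fixed-window counter is cheaper but lets bursts through at window boundaries.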

Dynamic rate limiting

Rate limits are generally static for smaller systems. For larger systems that scale well, however, a rate limit holds only until the system scales out. The rate limiter should therefore read the system's metrics and pre-calculate the request count the system can currently handle.
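One way to pre-calculate that request count is a simple capacity formula fed by pod and CPU metrics. The formula below is an assumption made for illustration (linear capacity per pod, proportional back-off above a target CPU utilization); the function and parameter names are hypothetical.

```python
def dynamic_limit(pod_count: int, per_pod_rps: float, cpu_utilization: float,
                  target_utilization: float = 0.75) -> int:
    """Estimate the requests/second the backend can currently absorb.

    Assumptions (not from the design itself): capacity grows linearly with the
    number of pods, and when average CPU utilization rises above the target,
    the limit shrinks in proportion so the cluster has room to scale out.
    """
    if pod_count <= 0 or per_pod_rps <= 0:
        return 0
    limit = pod_count * per_pod_rps
    if cpu_utilization > target_utilization:
        limit *= target_utilization / cpu_utilization
    return int(limit)
```

For example, 4 pods at an assumed 100 requests/second each give a limit of 400 while CPU stays under 75%, and 300 once CPU reaches 100%. After the autoscaler adds pods, the same call returns a higher limit.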

Bandwidth based control

For systems that are heavy on upload/download traffic, this feature can queue requests when the amount of data exchanged per second crosses a certain threshold.
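Queuing by bandwidth can be sketched with a token bucket whose tokens are bytes. The token bucket is a technique chosen here for illustration, not one the design names. Instead of rejecting, the sketch returns how long a transfer should wait in the queue. Names and parameters are hypothetical.

```python
import time
from typing import Optional


class ByteTokenBucket:
    """Token bucket in which one token is one byte of transfer budget."""

    def __init__(self, bytes_per_second: float, burst_bytes: float):
        self.rate = bytes_per_second
        self.capacity = burst_bytes
        self.tokens = burst_bytes  # start with a full burst allowance
        self.last: Optional[float] = None  # time of the previous refill

    def delay_for(self, size_bytes: int, now: Optional[float] = None) -> float:
        """Reserve budget for a transfer and return how many seconds it should wait."""
        now = time.monotonic() if now is None else now
        if self.last is not None:
            # Refill for the time elapsed since the previous call, up to the burst size.
            self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        # The balance may go negative: the deficit is the backlog already queued.
        self.tokens -= size_bytes
        return 0.0 if self.tokens >= 0 else -self.tokens / self.rate


bucket = ByteTokenBucket(bytes_per_second=1000, burst_bytes=1000)
delays = [bucket.delay_for(500, now=0.0), bucket.delay_for(1000, now=0.0)]  # [0.0, 0.5]
```

Because each reservation deepens the deficit, later transfers automatically wait behind earlier ones, which gives the queuing behavior described above.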

IP based control

This form of rate limiting specifically targets DoS attacks (as opposed to DDoS attacks). A DoS attack typically comes from a single IP trying to brute-force its way into the system. The rate limiter should be able to block such an attack and also blacklist the offending IP address for a predefined duration.
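A minimal sketch of per-IP blocking with a timed blacklist, assuming a fixed-window counter per IP and in-process state; the class name, thresholds, and durations are illustrative.

```python
import time
from typing import Dict, Optional, Tuple


class IpBlocker:
    """Per-IP fixed-window counter that blacklists an offending IP for a set time."""

    def __init__(self, max_per_window: int, window_seconds: float, block_seconds: float):
        self.max_per_window = max_per_window
        self.window_seconds = window_seconds
        self.block_seconds = block_seconds
        self._windows: Dict[str, Tuple[int, int]] = {}  # ip -> (window index, count)
        self._blocked_until: Dict[str, float] = {}  # ip -> time the blacklist entry expires

    def allow(self, ip: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        until = self._blocked_until.get(ip)
        if until is not None and now < until:
            return False  # still blacklisted
        window = int(now // self.window_seconds)
        start, count = self._windows.get(ip, (window, 0))
        # Reset the count when the request falls into a new window.
        count = count + 1 if start == window else 1
        self._windows[ip] = (window, count)
        if count > self.max_per_window:
            self._blocked_until[ip] = now + self.block_seconds
            return False
        return True
```

With `IpBlocker(3, 1.0, 60.0)`, a fourth request from the same IP within one second is rejected, and that IP stays blocked for the next 60 seconds while other IPs are unaffected.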

DDoS attack prevention

A DDoS attack differs from the IP based case above in that it comes from many IP addresses. The rate limiter therefore needs to analyze traffic continuously to find sudden spikes in requests from specific IPs, using the 99th percentile of a histogram of per-IP request counts as the reference point. It can then dynamically block all the IPs responsible for the DDoS. These blocks can be short-term so that a false positive is not blocked for long.
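The percentile check can be sketched as follows. This version assumes the 99th percentile is taken from a recent baseline window of per-IP counts and that an IP counts as spiking when its current count exceeds that baseline by a hypothetical `spike_factor`. Comparing against a baseline rather than the current window keeps the threshold meaningful even when many IPs attack at once.

```python
import math
from collections import Counter
from typing import Iterable, List, Set


def percentile(values: List[int], pct: float) -> int:
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def spiking_ips(baseline_counts: List[int], current_ips: Iterable[str],
                spike_factor: float = 3.0) -> Set[str]:
    """Flag IPs whose request count in the current window is a sudden spike.

    baseline_counts: per-IP request counts from a recent "normal" window.
    current_ips: the client IP of every request in the current window.
    """
    threshold = percentile(baseline_counts, 99) * spike_factor
    current = Counter(current_ips)
    return {ip for ip, n in current.items() if n > threshold}
```

The flagged set would then be blocked for a short duration, which limits the cost of any false positives.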

User/API key request quota

This feature lets the rate limiter read the authorization context to identify the end user, then bucket requests per user to check whether the request count exceeds the allocated maximum quota or per-second request rate. Quotas should be configurable, and the rate limiter should provide the ability to configure “How to identify users?” and “How to check user quota?”
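The two configurable questions map naturally onto two pluggable hooks. This is a minimal sketch; the hook signatures, the `X-API-Key` header, and the quota values are all hypothetical, and quota reset (for example, per day) is left out.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Mapping, Optional

# Hypothetical hook types: one answers "How to identify users?",
# the other answers "How to check user quota?".
IdentifyFn = Callable[[Mapping[str, str]], Optional[str]]
QuotaFn = Callable[[str], int]


@dataclass
class QuotaLimiter:
    identify: IdentifyFn
    quota_for: QuotaFn
    used: Dict[str, int] = field(default_factory=dict)  # user -> requests counted so far

    def allow(self, headers: Mapping[str, str]) -> bool:
        user = self.identify(headers)
        if user is None:
            return False  # requests with no identifiable user get no quota in this sketch
        if self.used.get(user, 0) >= self.quota_for(user):
            return False
        self.used[user] = self.used.get(user, 0) + 1
        return True


# Example configuration: identify users by a hypothetical API key header.
quota_limiter = QuotaLimiter(
    identify=lambda headers: headers.get("X-API-Key"),
    quota_for=lambda user: 1000 if user.startswith("premium-") else 2,
)
```

Keeping identification and quota lookup as injected functions means the same engine can read a JWT claim, an API key, or a session ID without code changes to the counting logic.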

Route based control

All the above features need to work per route prefix, as provided by each service. This ensures that rate limits are applied according to each service’s internal handling capacity.
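Route based control usually comes down to longest-prefix matching. A minimal sketch, assuming rules are keyed by path prefix and that a prefix matches only on whole path segments; the rule contents (such as `rps`) are hypothetical.

```python
from typing import Dict, Optional


def match_route(path: str, rules: Dict[str, dict]) -> Optional[dict]:
    """Return the rule for the longest configured prefix that matches `path`."""
    best = None
    for prefix in rules:
        # Match whole segments only, so "/api/orders" does not match "/api/ordersx".
        if path == prefix or path.startswith(prefix.rstrip("/") + "/"):
            if best is None or len(prefix) > len(best):
                best = prefix
    return rules[best] if best is not None else None


rules = {
    "/": {"rps": 1000},
    "/api/orders": {"rps": 50},
    "/api/orders/bulk": {"rps": 5},
}
```

Here `/api/orders/42` picks the 50 rps rule and `/api/orders/bulk/9` the 5 rps rule, while unrelated paths fall back to `/`. A production engine with many prefixes would likely use a trie instead of a linear scan.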

Observability

The rate limiter should emit enough logs and metrics to explain why requests are rejected. It should also log how much response time the rate limiter itself consumes when deciding whether a request may pass through.
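Both requirements can be met by wrapping each decision with a timer and a rejection counter. This is a minimal sketch: the logger name, log format, and reason strings are hypothetical, and in a real deployment the counter and latency would be exported to Prometheus or Datadog rather than kept in memory.

```python
import logging
import time
from collections import Counter
from typing import Callable, Tuple

log = logging.getLogger("ratelimiter")
rejections: Counter = Counter()  # rejection reason -> count


def timed_decision(decide: Callable[[], Tuple[bool, str]]) -> Tuple[bool, float]:
    """Run a rate-limit decision, log its latency, and count rejections by reason."""
    start = time.perf_counter()
    allowed, reason = decide()
    elapsed_ms = (time.perf_counter() - start) * 1000
    if not allowed:
        rejections[reason] += 1
        log.info("rejected reason=%s decision_ms=%.3f", reason, elapsed_ms)
    else:
        log.debug("allowed decision_ms=%.3f", elapsed_ms)
    return allowed, elapsed_ms
```

Recording the reason alongside the latency lets an operator tell at a glance whether rejections come from IP blocks, quotas, or dynamic rules, and whether the limiter itself is adding noticeable delay.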

Configurability

Finally, for a rate limiter service with such an exhaustive suite of features, it is important to make it easily configurable from the outside without restarting the rate limiting engine itself.

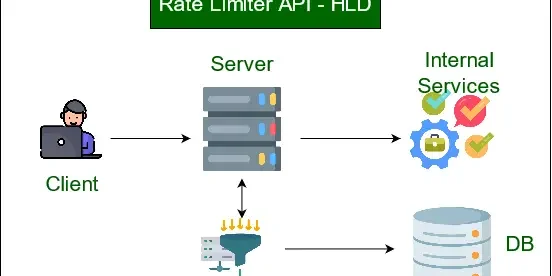

Architecture

The design makes the following assumptions about the deployment of the rate limiter service:

- Kubernetes based deployment

- Cluster supports Horizontal pod autoscaling

- Observability tooling exists inside the cluster, or access to it can be obtained via an API key

The diagram above shows a very high level architecture of the rate limiter service. Apps provide their app-specific rate limit configuration, while an admin feeds in a global configuration right at the beginning. Now, let us jump into the key components of the system.

Components

Requests Handler

This can be any high-performance reverse proxy server that can scale to millions of requests per second. My choice here would be a scalable HAProxy deployment with a static backend configuration. HAProxy in turn forwards requests to the rate limiter core engine.

Rate limiter Core Engine

This is the key component that handles requests before sending them to the API router. The rate limiter runs as a sidecar to the proxy server so that it scales along with the HAProxy servers. It reads the configuration present in the config map and reloads it when signaled by the configuration engine. It also communicates with the Dynamic rate limit handler, which provides criteria-based evaluation when needed.
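Reloading without a restart can be sketched as an atomic swap of the rule set. This sketch assumes the config map is mounted into the pod as a JSON file and that the configuration engine signals the sidecar with SIGHUP; both the file format and the signal are assumptions, not part of the design above, and SIGHUP exists only on Unix-like systems.

```python
import json
import signal


class ReloadableConfig:
    """Serves the current rule set and swaps in a new one on reload, without a restart."""

    def __init__(self, path: str):
        self.path = path  # assumed: a JSON file from the config map mounted into the pod
        self.rules = self._load()

    def _load(self) -> dict:
        with open(self.path) as f:
            return json.load(f)

    def reload(self) -> bool:
        try:
            new_rules = self._load()
        except (OSError, ValueError):
            return False  # unreadable or invalid file: keep serving the previous rules
        # Rebinding one attribute replaces the whole rule set at once, so readers
        # see either the old rules or the new ones, never a mix.
        self.rules = new_rules
        return True

    def install_signal_handler(self) -> None:
        # Assumed convention: the configuration engine sends SIGHUP after updating
        # the config map.
        signal.signal(signal.SIGHUP, lambda signum, frame: self.reload())
```

Parsing the new file before swapping means a malformed update leaves the limiter running on its last good configuration instead of failing open or crashing.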

Configuration engine

The configuration engine takes input from the applications about the rate limits for each URL path. An application can choose a static rate limit by IP, user, bandwidth, or API key, or define criteria based on pod count or CPU or memory utilization. This makes the configuration extremely flexible. The configuration engine runs as a separate service so that the workload of changing and validating rules doesn’t land on the rate limiter itself.
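The validation step could look like the sketch below. The rule schema (field names, allowed keys, and metric names) is hypothetical, modeled on the static and dynamic options listed above.

```python
from dataclasses import dataclass
from typing import List, Optional

STATIC_KEYS = {"ip", "user", "bandwidth", "api_key"}
DYNAMIC_METRICS = {"pod_count", "cpu", "memory"}


@dataclass(frozen=True)
class Rule:
    path_prefix: str
    key: Optional[str] = None  # static rules: what the limit is counted by
    limit: Optional[int] = None  # static rules: maximum requests (or bytes) per second
    metric: Optional[str] = None  # dynamic rules: which metric drives the decision
    threshold: Optional[float] = None  # dynamic rules: block when the metric crosses this


def validate(rule: Rule) -> List[str]:
    """Return a list of problems; an empty list means the rule can be published."""
    errors = []
    if not rule.path_prefix.startswith("/"):
        errors.append("path_prefix must start with '/'")
    is_static = rule.key is not None
    is_dynamic = rule.metric is not None
    if is_static == is_dynamic:
        errors.append("rule must be either static (key) or dynamic (metric), not both or neither")
    if is_static and (rule.key not in STATIC_KEYS or rule.limit is None or rule.limit <= 0):
        errors.append("static rule needs a known key and a positive limit")
    if is_dynamic and (rule.metric not in DYNAMIC_METRICS or rule.threshold is None):
        errors.append("dynamic rule needs a known metric and a threshold")
    return errors
```

Only rules that return no errors would be written to the config map, so the core engine never has to handle malformed input.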

Dynamic Rate limit handler

The Dynamic rate limit handler constantly evaluates the rules that apps define through the configuration engine. Based on these rules, it maintains a cache recording, for each URL prefix, whether requests to it should be blocked. When the rule for a prefix is dynamic, the rate limiter core engine queries the Dynamic rate limit handler and then decides whether the request may proceed.
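The split between evaluating rules and reading cached decisions can be sketched as follows. A plain dict stands in for the shared Redis cache, and rules are modeled as hypothetical zero-argument predicates that read current metrics.

```python
from typing import Callable, Dict


class DynamicRuleHandler:
    """Evaluates dynamic rules and caches a blocked/allowed flag per URL prefix."""

    def __init__(self, rules: Dict[str, Callable[[], bool]]):
        self.rules = rules  # prefix -> predicate returning True when the prefix should be blocked
        self.blocked: Dict[str, bool] = {}  # stand-in for the shared Redis cache

    def refresh(self) -> None:
        # In a deployment this would run on a timer and write its results to Redis.
        for prefix, should_block in self.rules.items():
            self.blocked[prefix] = should_block()

    def is_allowed(self, path: str) -> bool:
        # Called by the core engine for paths whose rule is dynamic.
        for prefix, blocked in self.blocked.items():
            if blocked and path.startswith(prefix):
                return False
        return True


metrics = {"cpu": 0.5}  # stand-in for values read from Prometheus or Datadog
handler = DynamicRuleHandler({"/api/reports": lambda: metrics["cpu"] > 0.9})
handler.refresh()
```

Because the core engine only reads cached flags, a metric change takes effect after the next `refresh()`, which is the same eventual-consistency delay described under Limitations.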

Datadog/Prometheus

These observability tools provide metrics on the number of pods running for each application and their resource utilization, as well as statistics related to rate limiting.

NoSQL DB

A NoSQL database like MongoDB can be used to maintain an audit trail of the IPs that are currently blocked. It also supports lookups when requests from the same IP recur. A NoSQL database is expected to provide higher throughput than a relational database for this write-heavy use case.

Redis cache

The Redis cache supports the dynamic rate limiting engine by caching rule evaluations per URL prefix. Because Redis is a shared, distributed store, multiple dynamic rate limiter pods can also share data with each other through it.

API router

The API router is the final piece of this system and acts as the terminating point for rate limiting. Once a request is allowed through the core of the system, it reaches the API router and is eventually served after authentication and authorization.

Limitations

The above design handles the majority of use cases. However, there are some key points to remember when implementing the system:

- Due to eventual consistency in the databases as well as the Redis cache, an IP may not be blocked immediately. It takes time for the data to persist and for the respective rate limiter pod to pick it up.

- With too many custom rate limit rules, the dynamic rate limiter engine could get overwhelmed.

Performance

The architecture defined above is highly performant for the following reasons:

- Each service component autoscales

- The reverse proxy and the rate limiter run together as a single unit, so they scale together and the proxy never needs dynamic backend configuration

- Redis is an in-memory store, so it generally serves data much faster than a relational database can.

Conclusion

A rate limiter becomes a complex system once you try to make it feature-rich. That said, if you are looking to build a very simple rate limiter, you can easily implement IP based or token based rate limiting with a reverse proxy like NGINX. Rate limiting is an important component of any high-traffic web application, protecting it from exploitation.